While adding a recent feature to our Kubernetes compute platform, we needed to mutate newly created pods based on annotations set by users. The mutation had to follow simple business rules and didn’t need to keep track of any state. Surely there must be a canonical solution to this simple problem? Well, sort of.

There are powerful frameworks like Kubebuilder which address the many aspects of writing Kubernetes admission controllers. We had simple needs, however, and decided to write our own stateless web service that replies to POST requests with a bit of JSON.

When I first heard about Kubernetes admission controllers a few years ago, it took me a moment to wrap my head around them, and I didn’t think that I would ever be able to write one from scratch. However, when boiled down to its core elements, the complexity fades away, and today we’ll look at how to write a Kubernetes admission webhook in Go with minimal dependencies. This illustrates how admission webhooks work and offers a lightweight solution to real problems. This blog post can be consumed on its own; however, the source code has been made available at https://github.com/slackhq/simple-kubernetes-webhook and is fully runnable on your local machine using a few make commands. I encourage you to run it yourself, explore the code, deploy some pods, and experiment with the webhook!

A Kubernetes admission what?

First let’s have a look at the definition in the official docs:

What are admission webhooks?

- Admission webhooks are HTTP callbacks that receive admission requests and do something with them. You can define two types of admission webhooks, validating admission webhook and mutating admission webhook. Mutating admission webhooks are invoked first, and can modify objects sent to the API server to enforce custom defaults. After all object modifications are complete, and after the incoming object is validated by the API server, validating admission webhooks are invoked and can reject requests to enforce custom policies.

In other words, an admission webhook (validating or mutating) is a web service that the Kubernetes API server can be configured to contact when selected operations occur on selected Kubernetes resources. In the case discussed here, when a pod is created the webhook inspects the object and can either allow or reject the request (validation), or modify it by returning a patch (mutation).

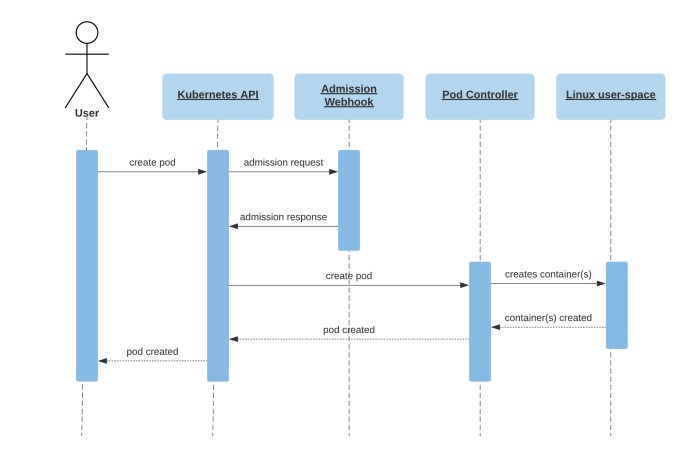

Figure 1 is a simplified sequence diagram showing the flow of a user creating a pod, where an admission webhook has been configured to receive CREATE operations on pod resources. Note that users usually create deployments, jobs, or other higher-level objects which result in pods being created and follow the same flow as pictured. For a more in-depth explanation, see A Guide to Kubernetes Admission Controllers on the Kubernetes blog.

We covered admission webhooks but they’re often conflated with admission controllers. What is a controller? Back to the docs…

Controllers

- In Kubernetes, controllers are control loops that watch the state of your cluster, then make or request changes where needed. Each controller tries to move the current cluster state closer to the desired state.

- A controller tracks at least one Kubernetes resource type.

An admission controller, then, is an admission webhook that also acts as a controller. For example, the built-in Pod controller controls underlying pod resources by keeping track of their state and taking actions when needed. It is common to build admission webhooks that control user-defined custom resources (CRDs), but controlling resources isn’t required to build a functional admission webhook. Today we’re going to demonstrate how to build a validating and mutating admission webhook that has no controller capabilities: it simply takes in admission requests and returns admission responses synchronously with no side effects.

simple-kubernetes-webhook

During a recent project at Slack, we needed to inject tolerations into pods when they are created. I looked for solutions online and mostly found resources on how to create admission controllers using Kubebuilder or Operator SDK. While those are very powerful frameworks, we didn’t want complex software with many dependencies and a host of features we didn’t need (such as controller capabilities or CRD management).

Coming from a systems engineering background, software development hasn’t been my strongest skill over the years. However, since I joined Slack’s Cloud Engineering team, I’ve had the chance to work on an array of different internal tools and services, mostly written in Go. And so, with the support of my team and the help of more experienced colleagues, we wrote a lightweight Go web server and forked it to provide it as open source: slackhq/simple-kubernetes-webhook. Follow the README instructions to run a kind-powered Kubernetes cluster and a simple admission webhook on your local machine!

Kubernetes configuration

Let’s take a look at setting up an admission webhook in Kubernetes. We’ll look at the code of the webhook itself in the next section.

Webhook deployment

Our admission webhook is a service that runs in-cluster, so it’s a regular Kubernetes deployment:

```yaml
apiVersion: apps/v1
kind: Deployment
metadata:
  name: simple-kubernetes-webhook
  namespace: default
spec:
  selector:
    matchLabels:
      app: simple-kubernetes-webhook
  template:
    metadata:
      labels:
        app: simple-kubernetes-webhook
    spec:
      containers:
        - image: simple-kubernetes-webhook:latest
          name: simple-kubernetes-webhook
          volumeMounts:
            - name: tls
              mountPath: "/etc/admission-webhook/tls"
      volumes:
        - name: tls
          secret:
            secretName: simple-kubernetes-webhook-tls
```

Kubernetes requires a Service to communicate with Validating or Mutating webhooks:

```yaml
apiVersion: v1
kind: Service
metadata:
  name: simple-kubernetes-webhook
  namespace: default
spec:
  ports:
    - port: 443
      protocol: TCP
      targetPort: 443
  selector:
    app: simple-kubernetes-webhook
```

Kubernetes also requires communication to webhooks be encrypted, so we’ll use a Secret to store our TLS certificate and its corresponding private key:

```yaml
apiVersion: v1
kind: Secret
metadata:
  name: simple-kubernetes-webhook-tls
type: kubernetes.io/tls
data:
  tls.crt: LS0t...
  tls.key: LS0t...
```

The Kubernetes API server will only use HTTPS to communicate with admission webhooks. To support HTTPS, we need a TLS certificate. You can either get a TLS certificate from an externally trusted Certificate Authority (CA), or mint your own and save the CA bundle for the next section. Make sure that the SubjectAltName (SAN) is set to the service hostname (which contains the service name and the namespace), such as simple-kubernetes-webhook.default.svc.
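If you mint your own certificate, the important detail is the SAN. Here is a minimal sketch using only the Go standard library, assuming a self-signed certificate is good enough for local experiments; `newSelfSignedCert` and `sanOf` are hypothetical helper names, not part of the webhook's code.

```go
package main

import (
	"crypto/ecdsa"
	"crypto/elliptic"
	"crypto/rand"
	"crypto/x509"
	"crypto/x509/pkix"
	"encoding/pem"
	"fmt"
	"math/big"
	"time"
)

// newSelfSignedCert (hypothetical helper) returns a PEM-encoded self-signed
// certificate and its private key, with dnsName set as the SubjectAltName.
func newSelfSignedCert(dnsName string) ([]byte, []byte, error) {
	key, err := ecdsa.GenerateKey(elliptic.P256(), rand.Reader)
	if err != nil {
		return nil, nil, err
	}
	tmpl := &x509.Certificate{
		SerialNumber:          big.NewInt(1),
		Subject:               pkix.Name{CommonName: dnsName},
		DNSNames:              []string{dnsName}, // this is the SAN the API server checks
		NotBefore:             time.Now().Add(-time.Minute),
		NotAfter:              time.Now().Add(365 * 24 * time.Hour),
		KeyUsage:              x509.KeyUsageDigitalSignature,
		ExtKeyUsage:           []x509.ExtKeyUsage{x509.ExtKeyUsageServerAuth},
		BasicConstraintsValid: true,
	}
	// Self-signed: the template is both the certificate and its issuer.
	der, err := x509.CreateCertificate(rand.Reader, tmpl, tmpl, &key.PublicKey, key)
	if err != nil {
		return nil, nil, err
	}
	keyDER, err := x509.MarshalECPrivateKey(key)
	if err != nil {
		return nil, nil, err
	}
	certPEM := pem.EncodeToMemory(&pem.Block{Type: "CERTIFICATE", Bytes: der})
	keyPEM := pem.EncodeToMemory(&pem.Block{Type: "EC PRIVATE KEY", Bytes: keyDER})
	return certPEM, keyPEM, nil
}

// sanOf (hypothetical helper) parses a PEM certificate and returns its DNS SANs.
func sanOf(certPEM []byte) ([]string, error) {
	block, _ := pem.Decode(certPEM)
	if block == nil {
		return nil, fmt.Errorf("no PEM block found")
	}
	cert, err := x509.ParseCertificate(block.Bytes)
	if err != nil {
		return nil, err
	}
	return cert.DNSNames, nil
}

func main() {
	certPEM, keyPEM, err := newSelfSignedCert("simple-kubernetes-webhook.default.svc")
	if err != nil {
		panic(err)
	}
	names, err := sanOf(certPEM)
	if err != nil {
		panic(err)
	}
	fmt.Println("SANs:", names)
	fmt.Printf("tls.crt: %d bytes, tls.key: %d bytes\n", len(certPEM), len(keyPEM))
}
```

The two PEM blobs would be base64-encoded into the Secret's `tls.crt` and `tls.key` fields. With a self-signed certificate, the certificate itself should also be usable as the CA bundle; for anything beyond local testing, a proper CA is the safer choice.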

Webhook Configuration

Now that we have a running deployment, we need to tell the Kubernetes API server to send requests to it when some events like pod creations happen. This is done by applying either a ValidatingWebhookConfiguration or a MutatingWebhookConfiguration object to the cluster. Let’s look at the ValidatingWebhookConfiguration:

```yaml
apiVersion: admissionregistration.k8s.io/v1
kind: ValidatingWebhookConfiguration
metadata:
  name: "simple-kubernetes-webhook.acme.com"
webhooks:
  - name: "simple-kubernetes-webhook.acme.com"
    namespaceSelector:
      matchLabels:
        admission-webhook: enabled
    rules:
      - apiGroups: [""]
        apiVersions: ["v1"]
        operations: ["CREATE"]
        resources: ["pods"]
        scope: "*"
    clientConfig:
      service:
        namespace: default
        name: simple-kubernetes-webhook
        path: /validate-pods
        port: 443
      caBundle: LS0tLS1CRUdJTiBDRVJUSUZJQ0FURS0tLS0tCk1JSUMzREND...
    # sideEffects and admissionReviewVersions are required fields in
    # admissionregistration.k8s.io/v1
    sideEffects: None
    admissionReviewVersions: ["v1"]
```

In the rules section, we define which events we care about (CREATE) on which resources (pods). In the clientConfig, we define the service endpoint, which is our simple-kubernetes-webhook service running in the default namespace and receiving HTTPS requests on the /validate-pods path. Notice the namespaceSelector section: we’re enabling this webhook only on namespaces with an admission-webhook: enabled label. The webhook server itself will need to run in a namespace that isn’t subject to the webhook, because otherwise we’d have an unsolvable dependency loop any time one of its pods isn’t running.
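To make the namespaceSelector behaviour concrete, here is a small sketch of how a matchLabels selector is evaluated: every key/value pair in the selector must be present on the namespace. The `matchLabels` function and the `apps` namespace are made up for illustration, and the sketch does not cover matchExpressions.

```go
package main

import "fmt"

// matchLabels reports whether every key/value pair in selector is present in
// labels. An empty selector matches everything.
func matchLabels(selector, labels map[string]string) bool {
	for k, want := range selector {
		if got, ok := labels[k]; !ok || got != want {
			return false
		}
	}
	return true
}

func main() {
	selector := map[string]string{"admission-webhook": "enabled"}

	// Hypothetical namespaces and their labels.
	namespaces := map[string]map[string]string{
		"apps":    {"admission-webhook": "enabled", "team": "web"},
		"default": {},
	}

	for _, name := range []string{"apps", "default"} {
		fmt.Printf("%s: webhook called = %v\n", name, matchLabels(selector, namespaces[name]))
	}
}
```

With the configuration above, pods created in an unlabeled namespace (such as the one running the webhook) never reach the webhook at all.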

We also deploy a very similar MutatingWebhookConfiguration, and that’s all we need to set up the flow described in Figure 1!

A simple Go web service

The following code excerpts have been edited to better fit the blog format: error handling and other statements have been removed. The unadulterated code is available in the GitHub repo.

Our main application consists of a standard Go http server:

```go
func main() {
	// TLS certificate and key files, as mounted from the Secret by the Deployment
	cert := "/etc/admission-webhook/tls/tls.crt"
	key := "/etc/admission-webhook/tls/tls.key"

	// handle our core application
	http.HandleFunc("/validate-pods", ServeValidatePods)
	http.HandleFunc("/mutate-pods", ServeMutatePods)
	http.HandleFunc("/health", ServeHealth)

	logrus.Print("Listening on port 443...")
	logrus.Fatal(http.ListenAndServeTLS(":443", cert, key, nil))
}
```

It handles requests coming in at /validate-pods and /mutate-pods, which match the client config paths in the webhook configuration objects.
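The /health handler isn't shown in this post. A minimal sketch, assuming it only needs to answer 200 OK so that probes can tell the server is up; `serveHealth` and `probe` are hypothetical names, and `probe` uses `net/http/httptest` to call the handler in-process without opening a port.

```go
package main

import (
	"fmt"
	"net/http"
	"net/http/httptest"
)

// serveHealth is a hypothetical stand-in for ServeHealth: it always replies
// with 200 and a short body.
func serveHealth(w http.ResponseWriter, r *http.Request) {
	w.WriteHeader(http.StatusOK)
	fmt.Fprint(w, "OK")
}

// probe calls a handler in-process and returns the status code and body.
func probe(h http.HandlerFunc, path string) (int, string) {
	req := httptest.NewRequest(http.MethodGet, path, nil)
	rec := httptest.NewRecorder()
	h(rec, req)
	return rec.Code, rec.Body.String()
}

func main() {
	code, body := probe(serveHealth, "/health")
	fmt.Println(code, body)
}
```

The same in-process approach works for exercising the validation and mutation handlers in unit tests.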

Validating

We serve validation requests with the ServeValidatePods function:

```go
// ServeValidatePods validates an admission request and then writes an admission
// review to `w`
func ServeValidatePods(w http.ResponseWriter, r *http.Request) {
	// decode the AdmissionReview sent by the API server
	in, err := parseRequest(*r)

	adm := admission.Admitter{
		Logger:  logger,
		Request: in.Request,
	}

	// build the AdmissionReview response
	out, err := adm.ValidatePodReview()

	// serialize the response and send it back (error handling elided)
	w.Header().Set("Content-Type", "application/json")
	jout, err := json.Marshal(out)
	fmt.Fprintf(w, "%s", jout)
}
```

Here we’re parsing an AdmissionReview request sent to the webhook by the Kubernetes API server: it’s a JSON object (documented here) that contains the pod resource itself as well as some extra metadata. Once parsed, we pass it to an instance of an Admitter from our admission package and get it to generate an AdmissionReview response, which we send back to the Kubernetes API server. An admission review response is a JSON document that contains the following:
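To give a feel for what the parsing step involves, here is a dependency-free sketch. The `Admitter` above holds a `*admissionv1.AdmissionRequest`, so the real code relies on the Kubernetes API types; the trimmed-down structs, `parseReview`, `podName`, and the sample payload below are assumptions made for illustration.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// admissionReview mirrors only the AdmissionReview fields used in this sketch.
type admissionReview struct {
	APIVersion string            `json:"apiVersion"`
	Kind       string            `json:"kind"`
	Request    *admissionRequest `json:"request,omitempty"`
}

type admissionRequest struct {
	UID       string          `json:"uid"`
	Operation string          `json:"operation"`
	Namespace string          `json:"namespace"`
	Object    json.RawMessage `json:"object"` // the pod, still JSON-encoded
}

// parseReview decodes a request body and checks that it carries a request.
func parseReview(body []byte) (*admissionReview, error) {
	var ar admissionReview
	if err := json.Unmarshal(body, &ar); err != nil {
		return nil, fmt.Errorf("decoding admission review: %w", err)
	}
	if ar.Request == nil {
		return nil, fmt.Errorf("admission review has no request")
	}
	return &ar, nil
}

// podName extracts metadata.name from the embedded object.
func podName(ar *admissionReview) (string, error) {
	var pod struct {
		Metadata struct {
			Name string `json:"name"`
		} `json:"metadata"`
	}
	if err := json.Unmarshal(ar.Request.Object, &pod); err != nil {
		return "", fmt.Errorf("decoding pod: %w", err)
	}
	return pod.Metadata.Name, nil
}

// sample is a made-up, heavily trimmed AdmissionReview request.
const sample = `{
  "apiVersion": "admission.k8s.io/v1",
  "kind": "AdmissionReview",
  "request": {
    "uid": "9e8992f7-5761-4a27-a7b0-501b0d61c7f6",
    "operation": "CREATE",
    "namespace": "apps",
    "object": {"apiVersion": "v1", "kind": "Pod", "metadata": {"name": "lifespan-seven"}}
  }
}`

func main() {
	ar, err := parseReview([]byte(sample))
	if err != nil {
		panic(err)
	}
	name, err := podName(ar)
	if err != nil {
		panic(err)
	}
	fmt.Println(ar.Request.UID, ar.Request.Operation, name)
}
```

Keeping the object as `json.RawMessage` defers decoding the pod until a validator or mutator actually needs it.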

```json
{
  "apiVersion": "admission.k8s.io/v1",
  "kind": "AdmissionReview",
  "response": {
    "uid": "<value from request.uid>",
    "allowed": true
  }
}
```

If allowed is set to true, the pod creation can continue. If it is set to false, the request is rejected and the user gets an error message, which can be customised by populating response.status with a code and a message. Here’s an example:

```json
{
  "kind": "AdmissionReview",
  "apiVersion": "admission.k8s.io/v1",
  "response": {
    "uid": "9e8992f7-5761-4a27-a7b0-501b0d61c7f6",
    "allowed": false,
    "status": {
      "message": "pod name contains \"offensive\"",
      "code": 403
    }
  }
}
```
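A response like the ones above can be assembled with a few plain structs. This is only a sketch: the struct names are invented here, and the `reviewResponse` function merely imitates the shape of the helper called later in this post, whose actual implementation lives in the repo.

```go
package main

import (
	"encoding/json"
	"fmt"
	"net/http"
)

// status, response and review mirror only the response fields shown above.
type status struct {
	Code    int32  `json:"code"`
	Message string `json:"message"`
}

type response struct {
	UID     string  `json:"uid"`
	Allowed bool    `json:"allowed"`
	Status  *status `json:"status,omitempty"`
}

type review struct {
	APIVersion string   `json:"apiVersion"`
	Kind       string   `json:"kind"`
	Response   response `json:"response"`
}

// reviewResponse builds an AdmissionReview response. The uid must be the one
// received in the request so the API server can match the two.
func reviewResponse(uid string, allowed bool, code int32, msg string) review {
	return review{
		APIVersion: "admission.k8s.io/v1",
		Kind:       "AdmissionReview",
		Response: response{
			UID:     uid,
			Allowed: allowed,
			Status:  &status{Code: code, Message: msg},
		},
	}
}

func main() {
	r := reviewResponse(
		"9e8992f7-5761-4a27-a7b0-501b0d61c7f6",
		false,
		http.StatusForbidden,
		`pod name contains "offensive"`,
	)
	out, err := json.MarshalIndent(r, "", "  ")
	if err != nil {
		panic(err)
	}
	fmt.Println(string(out))
}
```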

The Admitter struct is an abstraction layer that produces an admission review response when either of its ValidatePodReview or MutatePodReview methods is called:

```go
// Admitter is a container for admission business
type Admitter struct {
	Logger  *logrus.Entry
	Request *admissionv1.AdmissionRequest
}

// ValidatePodReview takes an admission request and validates the pod within;
// it returns an admission review
func (a Admitter) ValidatePodReview() (*admissionv1.AdmissionReview, error) {
	// pod comes from a.Request; extracting it and handling val and err
	// are elided in this excerpt
	v := validation.NewValidator(a.Logger)
	val, err := v.ValidatePod(pod)

	return reviewResponse(a.Request.UID, true, http.StatusAccepted, "valid pod"), nil
}
```

It uses an instance of a Validator struct. Validator has a ValidatePod method that validates pods using a list of objects implementing the podValidator interface:

```go
// ValidatePod returns true if a pod is valid
func (v *Validator) ValidatePod(pod *corev1.Pod) (validation, error) {
	// list of all validations to be applied to the pod
	validations := []podValidator{
		nameValidator{v.Logger},
	}

	// apply all validations (handling of vp and err is elided in this excerpt)
	for _, v := range validations {
		vp, err := v.Validate(pod)
	}

	return validation{Valid: true, Reason: "valid pod"}, nil
}
```

Here there’s only one podValidator: nameValidator, which checks the name of a pod for unsavory strings and returns a boolean and, if the pod gets rejected, a reason.
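The pattern is easier to see with the elided parts filled back in. Below is a self-contained sketch of the idea, not the repo's code: the `pod` struct is a stand-in for `corev1.Pod`, the banned-word list is made up, and the first failing validation short-circuits the loop.

```go
package main

import (
	"fmt"
	"strings"
)

// pod is a stand-in for corev1.Pod with only the field this sketch needs.
type pod struct{ Name string }

type validation struct {
	Valid  bool
	Reason string
}

// podValidator is implemented by every individual check.
type podValidator interface {
	Validate(p *pod) (validation, error)
}

// nameValidator rejects pods whose name contains a banned substring.
type nameValidator struct{ banned []string }

func (n nameValidator) Validate(p *pod) (validation, error) {
	for _, s := range n.banned {
		if strings.Contains(p.Name, s) {
			return validation{Valid: false, Reason: fmt.Sprintf("pod name contains %q", s)}, nil
		}
	}
	return validation{Valid: true, Reason: "valid name"}, nil
}

// validatePod runs every validator and stops at the first rejection or error.
func validatePod(p *pod, validators []podValidator) (validation, error) {
	for _, v := range validators {
		res, err := v.Validate(p)
		if err != nil {
			return validation{Valid: false, Reason: err.Error()}, err
		}
		if !res.Valid {
			return res, nil
		}
	}
	return validation{Valid: true, Reason: "valid pod"}, nil
}

func main() {
	validators := []podValidator{nameValidator{banned: []string{"offensive"}}}
	for _, name := range []string{"lifespan-seven", "offensive-pod"} {
		res, err := validatePod(&pod{Name: name}, validators)
		if err != nil {
			panic(err)
		}
		fmt.Printf("%s: valid=%v reason=%s\n", name, res.Valid, res.Reason)
	}
}
```

Adding a new rule then means writing one more type with a `Validate` method and appending it to the list.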

Mutation

The mutation code path is very similar to the validation one, except this time the AdmissionReview response must contain a base64-encoded JSON patch of the desired modifications to apply to the pod resource. Such an admission review response looks like so:

```json
{
  "apiVersion": "admission.k8s.io/v1",
  "kind": "AdmissionReview",
  "response": {
    "uid": "<value from request.uid>",
    "allowed": true,
    "patchType": "JSONPatch",
    "patch": "eyJvcCI6ImFkZCIsInBhdGgiOiIvc3BlYy9jb250YWluZXJzLzAvZW52IiwidmFsdWUiOlt7Im5hbWUiOiJLVUJFIiwidmFsdWUiOiJ0cnVlIn1dfQ=="
  }
}
```

Where patch is a base64-encoded JSON patch:

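```json
{"op":"add","path":"/spec/containers/0/env","value":[{"name":"KUBE","value":"true"}]}
```

The decoded value above is a single operation; per RFC 6902, a complete JSON Patch document is an array of such operations.

In the code you’ll find two objects implementing the podMutator interface: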
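Producing the patch field takes two steps: marshal a list of operations to JSON, then base64-encode the bytes. Here is a sketch with hypothetical `patchOp`, `encodePatch`, and `decodePatch` names. Note that when the patch is stored in a `[]byte` field, Go's `encoding/json` performs the base64 step on its own while marshalling.

```go
package main

import (
	"encoding/base64"
	"encoding/json"
	"fmt"
)

// patchOp is one JSON Patch (RFC 6902) operation.
type patchOp struct {
	Op    string      `json:"op"`
	Path  string      `json:"path"`
	Value interface{} `json:"value,omitempty"`
}

type envVar struct {
	Name  string `json:"name"`
	Value string `json:"value"`
}

// encodePatch marshals the operations as a JSON array and base64-encodes it.
func encodePatch(ops []patchOp) (string, error) {
	raw, err := json.Marshal(ops)
	if err != nil {
		return "", err
	}
	return base64.StdEncoding.EncodeToString(raw), nil
}

// decodePatch reverses encodePatch, which is handy when debugging a response.
func decodePatch(s string) (string, error) {
	raw, err := base64.StdEncoding.DecodeString(s)
	if err != nil {
		return "", err
	}
	return string(raw), nil
}

func main() {
	ops := []patchOp{{
		Op:    "add",
		Path:  "/spec/containers/0/env",
		Value: []envVar{{Name: "KUBE", Value: "true"}},
	}}
	encoded, err := encodePatch(ops)
	if err != nil {
		panic(err)
	}
	decoded, err := decodePatch(encoded)
	if err != nil {
		panic(err)
	}
	fmt.Println(encoded)
	fmt.Println(decoded)
}
```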

```go
mutations := []podMutator{
	minLifespanTolerations{Logger: log},
	injectEnv{Logger: log},
}
```

injectEnv injects a KUBE=true environment variable into the pod (see above JSON patch), whereas minLifespanTolerations injects a set of tolerations into a pod based on a custom annotation (this works in tandem with a set of taints on Kubernetes nodes which we might dig into in another blog post!).
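As an illustration of an annotation-driven mutation, here is a rough sketch that turns an annotation into a toleration patch. Everything specific in it is an assumption: the annotation key `example.com/min-lifespan`, the toleration it produces, and the simplified `pod` struct are invented for this example and are not the rules our webhook applies.

```go
package main

import (
	"encoding/json"
	"fmt"
)

// lifespanAnnotation is a hypothetical annotation key used for this sketch.
const lifespanAnnotation = "example.com/min-lifespan"

type toleration struct {
	Key      string `json:"key"`
	Operator string `json:"operator"`
	Value    string `json:"value,omitempty"`
	Effect   string `json:"effect"`
}

// pod is a simplified stand-in for corev1.Pod.
type pod struct {
	Annotations map[string]string
	Tolerations []toleration
}

type patchOp struct {
	Op    string      `json:"op"`
	Path  string      `json:"path"`
	Value interface{} `json:"value,omitempty"`
}

// tolerationPatch returns the JSON Patch operations needed to add a toleration
// matching the annotation value, or nil when the annotation is absent.
func tolerationPatch(p pod) []patchOp {
	v, ok := p.Annotations[lifespanAnnotation]
	if !ok {
		return nil
	}
	t := toleration{Key: lifespanAnnotation, Operator: "Equal", Value: v, Effect: "NoSchedule"}
	if len(p.Tolerations) == 0 {
		// The array may not exist yet, so add it as a whole.
		return []patchOp{{Op: "add", Path: "/spec/tolerations", Value: []toleration{t}}}
	}
	// "-" appends to an existing array without touching its current items.
	return []patchOp{{Op: "add", Path: "/spec/tolerations/-", Value: t}}
}

func main() {
	p := pod{Annotations: map[string]string{lifespanAnnotation: "7"}}
	out, err := json.Marshal(tolerationPatch(p))
	if err != nil {
		panic(err)
	}
	fmt.Println(string(out))
}
```

The two branches matter because a JSON Patch `add` to `/spec/tolerations/-` fails when the target array is missing, while adding the whole array would overwrite tolerations the user already set.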

Tech debt and limitations of local testing

I omitted the fact that we already had a fully functional webhook: it is based on a very old Kubebuilder version, and upgrading it to a recent one would require such a rewrite that we decided to write the new webhook described in this post instead. The plan was to migrate features from the old to the new webhook, so that we could one day retire the old one, but as it happens we now have two webhooks to maintain and finding time and resources to finish the migration is our remaining challenge.

Update as of February 2023: We finally finished the migration and retired the old webhook!

It’s also worth mentioning that webhooks aren’t ordered: the Kubernetes API server calls them in no guaranteed order. Because in our case we cared about running the new webhook last, we added reinvocationPolicy: IfNeeded to the MutatingWebhookConfiguration, resulting in the webhook often getting called twice. This is one of the reasons why mutations should be idempotent!
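Idempotency here means that a second call on an already-mutated pod produces no further changes. A sketch of what that could look like for the KUBE=true injection, using simplified stand-in types and a hypothetical `injectEnvPatch` function rather than the repo's implementation:

```go
package main

import "fmt"

type envVar struct {
	Name  string `json:"name"`
	Value string `json:"value"`
}

// container is a simplified stand-in for corev1.Container.
type container struct {
	Env []envVar
}

type patchOp struct {
	Op    string      `json:"op"`
	Path  string      `json:"path"`
	Value interface{} `json:"value,omitempty"`
}

// hasEnv reports whether a variable with the given name is already set.
func hasEnv(env []envVar, name string) bool {
	for _, e := range env {
		if e.Name == name {
			return true
		}
	}
	return false
}

// injectEnvPatch returns patch operations that add want to every container
// that does not define it yet. Calling it again after the patch has been
// applied returns no operations.
func injectEnvPatch(containers []container, want envVar) []patchOp {
	var ops []patchOp
	for i, c := range containers {
		if hasEnv(c.Env, want.Name) {
			continue // already injected, nothing to do
		}
		if len(c.Env) == 0 {
			path := fmt.Sprintf("/spec/containers/%d/env", i)
			ops = append(ops, patchOp{Op: "add", Path: path, Value: []envVar{want}})
			continue
		}
		path := fmt.Sprintf("/spec/containers/%d/env/-", i)
		ops = append(ops, patchOp{Op: "add", Path: path, Value: want})
	}
	return ops
}

func main() {
	want := envVar{Name: "KUBE", Value: "true"}

	before := []container{{}}
	fmt.Println("first call:", len(injectEnvPatch(before, want)), "operation(s)")

	// Simulate the pod as the webhook would see it on reinvocation.
	after := []container{{Env: []envVar{want}}}
	fmt.Println("second call:", len(injectEnvPatch(after, want)), "operation(s)")
}
```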

Having a reliable suite of unit tests and the ability to run the webhook locally has made developing new features a lot easier but cannot be solely relied upon. I recently caught a few issues in our dev environment, one of which almost made it to prod. It’s important to have a prod-like environment that can be broken without repercussions, where new components and features can be safely tested.

Conclusion

I once heard Kelsey Hightower say that everything we do in tech is just data in / data out. Today we saw a prime example of this by building a Kubernetes mutating and validating admission webhook that receives an admission review request and returns an admission review response with no side-effects, no bloat, and no future maintenance headaches. I hope you enjoyed reading this and enjoyed playing with your own local deployment of the webhook!
